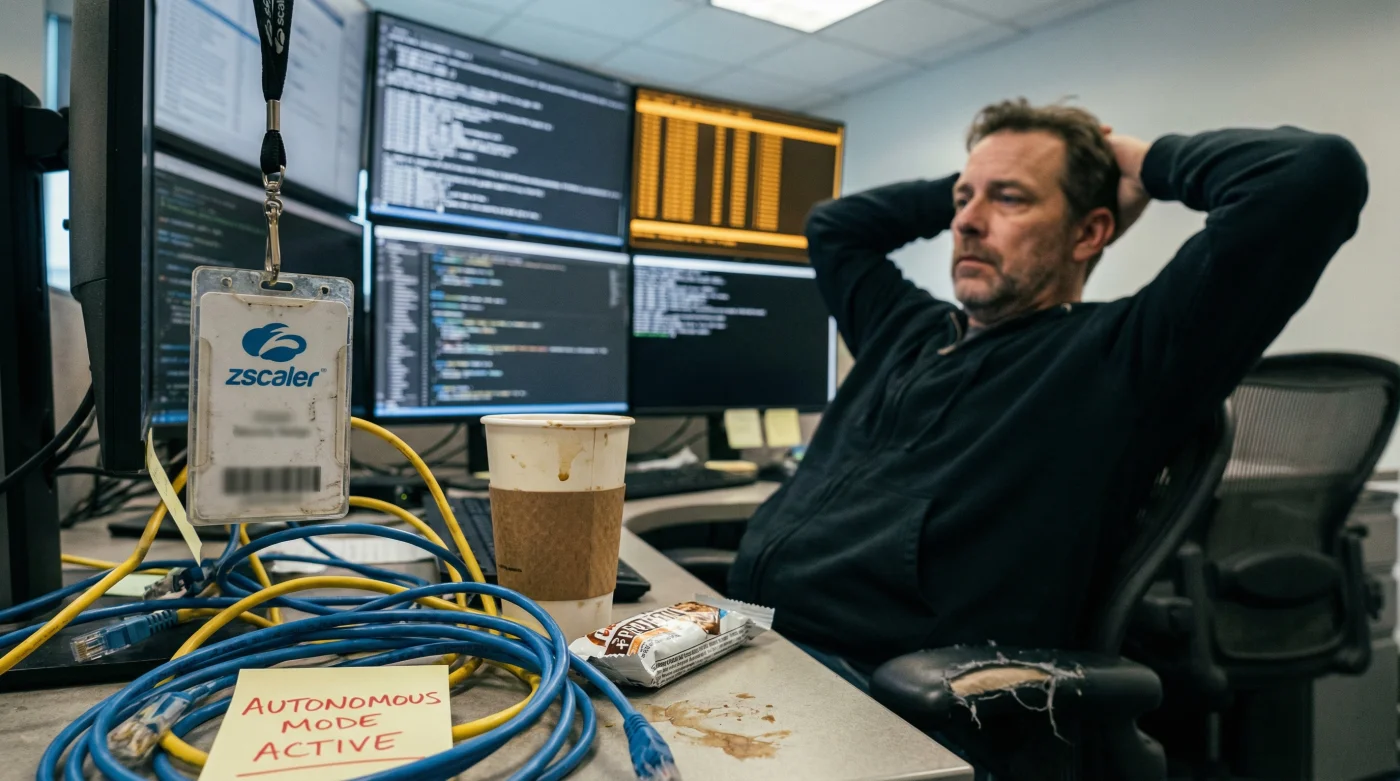

The era of the human cyber defender is quietly drawing to a close, not with a bang, but with the silent, terrifying efficiency of machine-on-machine warfare. For decades, the image of cybersecurity was a weary analyst in a darkened Security Operations Centre (SOC), scanning logs for anomalies. That model is now dangerously obsolete. As artificial intelligence begins to power a new generation of hyper-aggressive hacking tools, the response from industry giants has been swift and radical. Zscaler, a titan in the cloud security space, has officially deployed autonomous agents—digital sentinels designed to fight AI with AI.

This isn’t merely a software update; it is a fundamental institutional shift in how we conceive of digital defence. We are crossing the Rubicon into a landscape where human reaction times, measured in seconds or minutes, are hopelessly inadequate against attacks executing in milliseconds. The deployment of these autonomous agents marks the practical transition to a battlefield where algorithms hunt algorithms, and the role of the human operator shifts from frontline soldier to strategic overseer. The sheer speed of this escalation suggests that the internet is no longer just a network of information, but an active warzone of automated intelligence.

The Deep Dive: The Inevitability of Machine-on-Machine Warfare

The cybersecurity sector has long warned of an impending ‘AI arms race’, but for many UK businesses, this remained a theoretical threat. That changed rapidly with the commoditisation of Large Language Models (LLMs). Bad actors are no longer writing clumsy code or sending typo-ridden phishing emails. They are using sophisticated AI to generate polymorphic malware, which rewrites its own code (and therefore its signature) with each new infection, and to craft social engineering campaigns so culturally precise that they can fool even the most vigilant C-suite executive.

Zscaler’s move to deploy autonomous agents is a direct response to this asymmetry. Traditional security stacks are reactive; they wait for a known signature or a breach of policy. Autonomous agents are predictive and adaptive. They inhabit the network like antibodies, identifying threats not just by what they look like, but by their intent and behaviour.
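The gap between signature matching and behavioural detection can be illustrated with a toy Python sketch. Everything in it is hypothetical: the payload bytes, the `looks_like_ransomware` rule, its event name and its threshold of 50 are illustrative assumptions, not a description of how Zscaler's agents work. Appending a single byte to the payload changes its SHA-256 hash, so an exact-hash blocklist misses the variant, while a rule keyed on what the code does still fires.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Toy 'signature': the SHA-256 digest of the payload bytes."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical payload, and a blocklist built from a previously seen copy.
original_payload = b"\x90\x90encrypt_all_files();"
BLOCKLIST = {sha256_hex(original_payload)}

# A polymorphic variant: identical behaviour, one padding byte appended.
mutated_payload = original_payload + b"\x00"


def signature_match(payload: bytes) -> bool:
    """Reactive check: flags only byte-for-byte copies of known samples."""
    return sha256_hex(payload) in BLOCKLIST


def looks_like_ransomware(events: list[str], threshold: int = 50) -> bool:
    """Hypothetical behavioural rule: a burst of encrypted file rewrites."""
    return events.count("file_write_encrypted") >= threshold


# Simulated runtime events; both variants would produce the same ones.
observed_events = ["file_open"] * 10 + ["file_write_encrypted"] * 80

print(signature_match(original_payload))       # True
print(signature_match(mutated_payload))        # False: the hash changed
print(looks_like_ransomware(observed_events))  # True, whichever variant ran
```

Real behavioural engines weigh far more signals than a single event count, but the asymmetry is the same: the attacker can cheaply change what the code looks like, and far less cheaply change what it has to do.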

“We are witnessing the end of static defence. You cannot stop a swarm of AI-driven bots with a firewall and a prayer. You need autonomous agents that can out-think and out-manoeuvre the adversary in real-time. It is effectively fighting fire with fire, but on a digital scale we have never seen before.”

Deception and Detection: How the Agents Work

The core of Zscaler’s strategy relies on shifting the advantage back to the defender. Historically, attackers only needed to be right once, while defenders had to be right 100 per cent of the time. Autonomous agents flip this dynamic through advanced deception technologies and predictive analytics: once decoys are scattered through the network, it is the intruder who must avoid every trap, while a single wrong move is enough to expose them.
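The flip can be made concrete with a simple probability. Assume (a deliberate simplification) that each asset an intruder touches is, independently, a decoy with probability `d`. The chance of touching `n` assets without tripping a single decoy is then `(1 - d)^n`, which shrinks quickly as the intruder explores. The 5 per cent decoy density below is a hypothetical figure chosen for illustration.

```python
def p_evades_all_decoys(decoy_fraction: float, assets_touched: int) -> float:
    """Chance an intruder touches `assets_touched` assets without hitting a decoy.

    Simplifying assumption: each touch independently lands on a decoy with
    probability `decoy_fraction` (i.e. sampling with replacement).
    """
    if not 0.0 <= decoy_fraction <= 1.0:
        raise ValueError("decoy_fraction must be between 0 and 1")
    if assets_touched < 0:
        raise ValueError("assets_touched must be non-negative")
    return (1.0 - decoy_fraction) ** assets_touched


# Hypothetical: 5 per cent of reachable assets are decoys.
for n in (1, 10, 50):
    print(n, round(p_evades_all_decoys(0.05, n), 3))
```

Under these assumptions, an intruder who touches 50 assets stays hidden less than 8 per cent of the time, which is the sense in which the burden of being right every time shifts to the attacker.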

- Smart Decoys: These agents can spin up fake endpoints, credentials, and data caches that are indistinguishable from high-value assets. Because no legitimate user has any reason to touch a decoy, any interaction with one is a high-confidence alarm: the AI attacker is flagged instantly and its tactics are analysed.

- Behavioural Mapping: Unlike humans, who suffer from alert fatigue, autonomous agents can correlate millions of data points across the Zero Trust Exchange to spot subtle deviations in user behaviour that indicate a compromised account.

- Auto-Remediation: Upon detection, these agents don’t just send an email to IT; they can isolate the threat, revoke access tokens, and steer the attacker into a sandbox environment immediately.
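The three mechanisms above can be sketched as one small decision function. This is a minimal Python illustration under stated assumptions, not Zscaler's API: the decoy credential names, the single behavioural metric, the z-score threshold of 4.0 and the remediation action names are all hypothetical. A per-user z-score stands in for behavioural mapping; production systems correlate many signals rather than one.

```python
import math
from dataclasses import dataclass, field
from statistics import mean, pstdev

# Hypothetical decoy credentials: no legitimate user should ever present these.
DECOY_CREDENTIALS = {"svc-backup-admin", "finance-db-readonly"}


@dataclass
class UserBaseline:
    """History of one behavioural metric for one user (e.g. MB downloaded per hour)."""
    history: list[float] = field(default_factory=list)

    def z_score(self, value: float) -> float:
        """Standard deviations between `value` and this user's historical mean."""
        if len(self.history) < 2:
            return 0.0  # too little history to judge
        mu = mean(self.history)
        sigma = pstdev(self.history)
        if sigma == 0:
            # Perfectly constant history: any change is an extreme outlier.
            return 0.0 if value == mu else math.copysign(math.inf, value - mu)
        return (value - mu) / sigma


def assess(credential: str, baseline: UserBaseline, value: float,
           z_threshold: float = 4.0) -> list[str]:
    """Return hypothetical remediation steps for one login event."""
    if credential in DECOY_CREDENTIALS:
        # Decoy use is high confidence: contain immediately.
        return ["revoke_tokens", "isolate_session", "redirect_to_sandbox"]
    if baseline.z_score(value) >= z_threshold:
        # Unusual for this user: step up verification and alert a human.
        return ["require_mfa", "notify_analyst"]
    return []


alice = UserBaseline(history=[120, 95, 130, 110, 105])
print(assess("alice", alice, 115))           # []
print(assess("alice", alice, 900))           # ['require_mfa', 'notify_analyst']
print(assess("svc-backup-admin", alice, 0))  # decoy hit: full containment
```

The design choice worth noting is the asymmetry in responses: a decoy hit triggers full containment because it has almost no innocent explanation, whereas a statistical deviation only steps up verification.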

The Economics of Defence

The efficiency gains are stark when compared to traditional models:

| Metric | Human-Led SOC | Autonomous AI Agents |
|---|---|---|
| Reaction Time | 15–60 Minutes | < 1 Second |
| Availability | 8-Hour Shifts / Rotas | 24/7/365 Continuous |
| False Positives | High (Alert Fatigue) | Low (Context-Aware) |
| Scalability | Linear (Hire More Staff) | Elastic (Cloud-Native) |
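What those reaction times mean in practice depends on how fast the attacker moves. The short Python calculation below assumes a hypothetical automated attacker completing 10 actions (requests, login attempts, probes) per second and counts how many it finishes inside each response window from the comparison table.

```python
# Assumption: a hypothetical automated attacker completing 10 actions per second.
ATTACKER_ACTIONS_PER_SECOND = 10

# Reaction times from the comparison table, in seconds.
RESPONSE_WINDOWS_S = {
    "Human-led SOC, best case (15 min)": 15 * 60,
    "Human-led SOC, worst case (60 min)": 60 * 60,
    "Autonomous agent (upper bound, 1 s)": 1,
}


def actions_before_response(window_s: float, rate_per_s: float) -> int:
    """Attacker actions completed before the defender reacts."""
    return int(window_s * rate_per_s)


for label, window in RESPONSE_WINDOWS_S.items():
    count = actions_before_response(window, ATTACKER_ACTIONS_PER_SECOND)
    print(f"{label}: {count:,} actions")
```

At that assumed rate, the human-led window hands the attacker between 9,000 and 36,000 actions, against about 10 for the agent.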

The Risks of Autonomy

However, handing over the keys to the kingdom to autonomous code is not without peril. There is a palpable anxiety regarding ‘hallucinations’ or false positives where an AI agent might aggressively lock out legitimate users—potentially grinding business operations to a halt. Zscaler has addressed this by implementing strict guardrails and keeping humans in the loop for high-impact decisions, but the trajectory is clear: the training wheels are coming off.
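One way a guardrail against runaway lockouts could be expressed is a circuit breaker: the agent may lock accounts on its own, but only up to a cap within a sliding time window, after which it must hand the decision to a human. The Python sketch below is hypothetical throughout (the `LockoutGuardrail` class, its limits and its return values are illustrative assumptions), and is not a description of Zscaler's actual guardrails.

```python
from collections import deque


class LockoutGuardrail:
    """Hypothetical circuit breaker on automated lockouts.

    Allows at most `max_lockouts` automatic lockouts in any sliding window of
    `window_seconds`; beyond that, the agent must escalate to a human.
    """

    def __init__(self, max_lockouts: int = 5, window_seconds: float = 300.0):
        self.max_lockouts = max_lockouts
        self.window_seconds = window_seconds
        self._recent = deque()  # timestamps of recent automatic lockouts

    def request_lockout(self, now: float) -> str:
        # Forget lockouts that have slid out of the window.
        while self._recent and now - self._recent[0] >= self.window_seconds:
            self._recent.popleft()
        if len(self._recent) >= self.max_lockouts:
            # A burst of lockouts may mean the agent is misfiring: pause and ask.
            return "escalate_to_human"
        self._recent.append(now)
        return "lock_out"


guard = LockoutGuardrail(max_lockouts=3, window_seconds=60)
decisions = [guard.request_lockout(t) for t in (0, 5, 10, 15, 70)]
print(decisions)
# ['lock_out', 'lock_out', 'lock_out', 'escalate_to_human', 'lock_out']
```

The cap bounds the damage a hallucinating model can do before a person looks at it, at the cost of slowing the response to a genuine mass compromise.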

Furthermore, as these defence agents become standard, we can expect AI hackers to evolve again, likely developing ‘anti-agent’ capabilities designed to poison the data that defence AIs rely on. This is the nature of the beast; the cycle of innovation and circumvention is accelerating.
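Why poisoning works is easy to see with a learned baseline. In the hypothetical Python example below, an attacker slips a few inflated readings into a user's download history so that "normal" stretches far enough to hide a later bulk exfiltration. A mean-based baseline is dragged a long way; a median-based one barely moves. The data are invented, and the median is shown only as one common, simple robust statistic, not as what any particular vendor uses.

```python
from statistics import mean, median

# A user's normal hourly download volumes in MB (hypothetical data).
clean = [100, 110, 95, 105, 98, 102, 107, 99, 103, 101]

# Poisoning: the attacker injects inflated readings to widen the "normal" range.
poisoned = clean + [2000, 2000, 2000]

for name, summarise in (("mean", mean), ("median", median)):
    before, after = summarise(clean), summarise(poisoned)
    print(f"{name}: {before:.1f} -> {after:.1f}")
```

Here the mean jumps from 102.0 to 540.0 MB while the median moves only from 101.5 to 103.0 MB, so a threshold built on the mean would now tolerate far larger transfers.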

FAQ: Understanding Autonomous Cybersecurity

Will these autonomous agents replace human security teams?

Not entirely. They are designed to augment human teams by handling the massive volume of data and low-level threats. The goal is to free up human analysts to focus on complex investigations, policy architecture, and strategic resilience rather than staring at logs.

Is it safe to let AI make security decisions without approval?

Most autonomous systems operate on a tiered basis. They are granted authority to block obvious threats (like known malware or impossible travel logins) instantly. However, for more ambiguous situations, they typically flag the issue for human review or apply temporary restrictions rather than a permanent ban.
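The tiered approach, including the "impossible travel" check, can be sketched in a few lines of Python. Two logins whose great-circle distance implies a speed faster than any commercial flight are treated as clear-cut and blocked outright; anything merely risky gets a temporary restriction pending human review. The 1,000 km/h ceiling, the `risk_score` input and its 0.5 cut-off are hypothetical assumptions for illustration.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 1000.0  # assumption: faster than a commercial flight


@dataclass
class Login:
    lat: float          # degrees
    lon: float          # degrees
    timestamp_s: float  # seconds since the epoch


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two login locations, in km."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(h)))


def decide(prev: Login, curr: Login, risk_score: float) -> str:
    """Tiered response: act alone on clear-cut cases, defer ambiguous ones."""
    hours = max((curr.timestamp_s - prev.timestamp_s) / 3600, 1e-9)  # avoid divide-by-zero
    if haversine_km(prev, curr) / hours > MAX_PLAUSIBLE_KMH:
        return "block"  # impossible travel: no human approval needed
    if risk_score >= 0.5:
        return "temporary_restriction_and_review"  # ambiguous: restrict, then ask a human
    return "allow"


london = Login(51.5074, -0.1278, 0)
sydney = Login(-33.8688, 151.2093, 3600)        # one hour later
manchester = Login(53.4808, -2.2426, 3 * 3600)  # three hours later

print(decide(london, sydney, risk_score=0.0))      # block
print(decide(london, manchester, risk_score=0.1))  # allow
print(decide(london, manchester, risk_score=0.7))  # temporary_restriction_and_review
```

In practice, VPN exit nodes and inaccurate IP geolocation can make legitimate logins look like impossible travel, which is one reason a "block" tier is usually kept narrow.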

How does this affect UK GDPR and data privacy?

Zscaler and similar providers operate under strict compliance frameworks. While the AI analyses traffic patterns, it does so largely through metadata and anonymised telemetry. Metadata is not automatically exempt, though: if it can be linked back to an identifiable employee, it is still personal data under UK GDPR. As with any AI integration, Data Protection Officers must ensure that the processing of employee behaviour data remains lawful and transparent.
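One common technique for reducing identifiability in telemetry is to replace user identifiers with keyed hashes (HMAC) before analysis, so behaviour can still be correlated per user without the analytics pipeline seeing names. The Python sketch below is a generic illustration; the key handling and event fields are hypothetical. Because whoever holds the key can re-link pseudonyms to people, this is pseudonymisation rather than anonymisation, and the output remains personal data under UK GDPR.

```python
import hashlib
import hmac
import secrets

# Assumption: a secret key held separately from the analytics pipeline.
# Anyone holding it can re-link pseudonyms to people (pseudonymisation,
# not anonymisation).
PSEUDONYM_KEY = secrets.token_bytes(32)


def pseudonymise(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Stable keyed pseudonym for a user identifier (HMAC-SHA256, hex)."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical telemetry event: the identifier is swapped, the metadata kept.
event = {"user": "alice@example.co.uk", "bytes_out": 52_000, "dest_port": 443}
telemetry = {**event, "user": pseudonymise(event["user"])}
print(telemetry["user"][:16], telemetry["bytes_out"])
```

A keyed hash is used instead of a plain hash because unkeyed hashes of email addresses can be reversed by simply hashing a list of likely addresses.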

Can AI hackers compromise the autonomous agents?

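Yes, and the risk is more than theoretical. This is known as ‘adversarial AI’ (or adversarial machine learning), where attackers craft inputs or tamper with training data to fool the defence model; such attacks are well documented in research. Security vendors counter this by continually retraining their models and by pooling what they learn across customers, for example through federated learning, so that an attack seen in one part of the world can quickly strengthen defences across the entire network.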
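The pooling step in federated learning can be sketched with federated averaging (FedAvg), the standard textbook aggregation: each site trains on its own data and shares only model weights, which a coordinator averages, weighted by how much data each site used. The Python below is a generic, minimal illustration with hypothetical two-parameter models and site sizes; it makes no claim about how any vendor implements this.

```python
def federated_average(client_weights: list[list[float]],
                      client_sizes: list[int]) -> list[float]:
    """FedAvg-style aggregation: average each model parameter across clients,
    weighting every client by the number of training examples it used."""
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one training-set size per client")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Three hypothetical sites train locally; only weights leave each site, not raw logs.
site_weights = [[0.2, 0.8], [0.4, 0.6], [1.0, 0.0]]
site_sizes = [100, 100, 200]
print(federated_average(site_weights, site_sizes))  # approximately [0.65, 0.35]
```

Because the site with 200 examples counts double, the global model leans towards what that site learned; a site that has just seen a new attack pattern pulls the shared model towards recognising it on the next round.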